
AI Development Company: How to Choose Right

Everyone wants AI now. The boardroom heard about it, the competitors claim they’re using it, and suddenly you need an AI development company yesterday.

Here’s the problem: the market is flooded with firms that slapped “AI” onto their existing dev shop landing page sometime around 2023. Some are genuinely brilliant. Others will burn through your budget building a demo that never makes it to production.

Choosing the wrong AI partner doesn’t just waste money—it poisons your organisation’s trust in AI altogether. We’ve seen it happen. A failed pilot becomes “we tried AI and it didn’t work,” when the real issue was the wrong team, wrong scope, or wrong approach entirely.

This guide helps you tell the difference.

What Does an AI Development Company Actually Do?

An AI development company builds intelligent systems that learn from data, make predictions, or automate decisions that previously required human judgement.

That sounds simple. It isn’t.

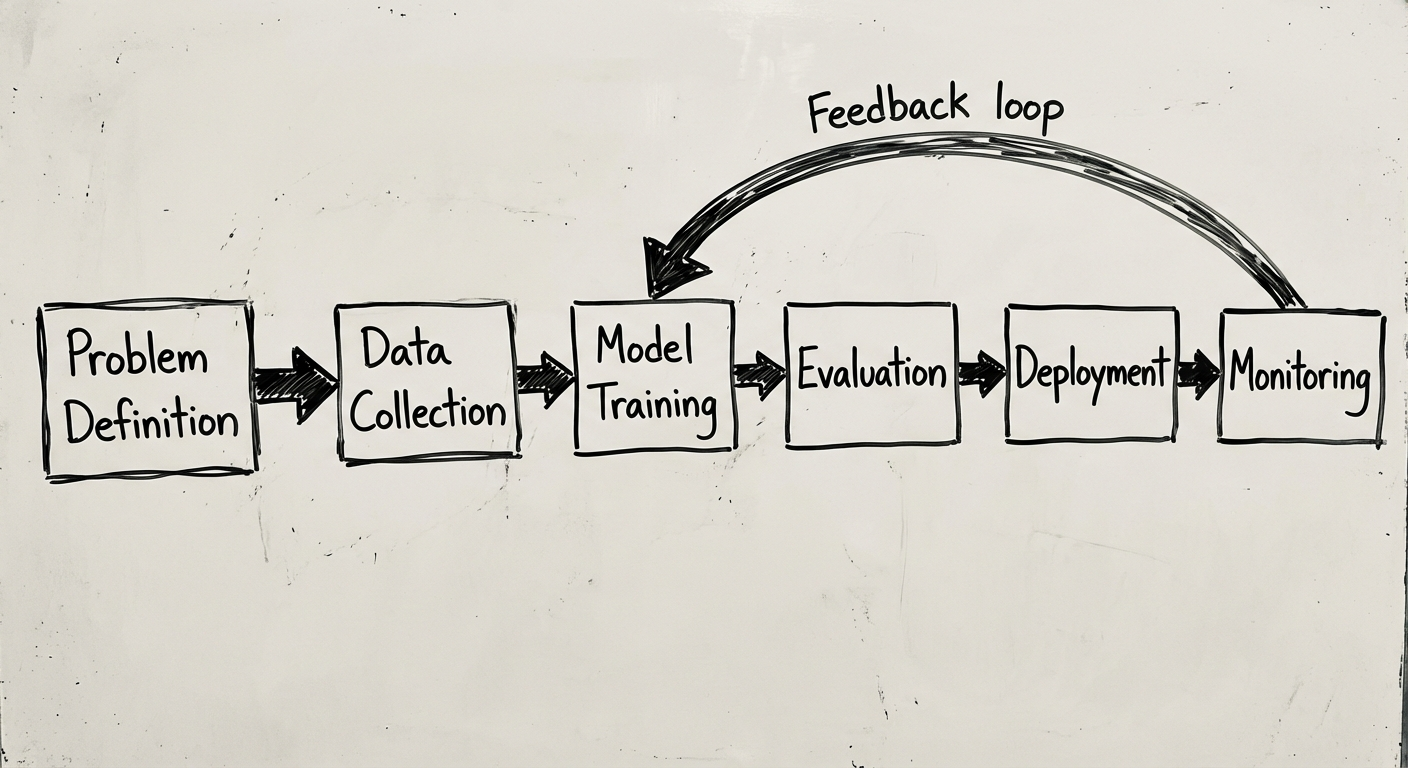

Unlike traditional software development—where you define inputs, logic, and outputs upfront—AI systems are probabilistic. They deal in confidence scores, training data, and model accuracy. The development process looks fundamentally different:

- Data assessment and preparation — Often 60-80% of the project timeline

- Model selection and training — Choosing the right algorithm for the problem

- Integration engineering — Connecting AI outputs to your existing systems

- Monitoring and retraining — Models degrade over time as data patterns shift

- MLOps infrastructure — The plumbing that keeps models running in production

A good AI partner handles all of this. A mediocre one builds a notebook in Jupyter and calls it a day.
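To make the "probabilistic" point concrete, here is a minimal sketch of how a production system might act on a confidence score instead of a fixed rule. The `Prediction` class, the `route_prediction` function, the route names, and the 0.85 threshold are all hypothetical and chosen for illustration only.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    label: str         # what the model thinks the answer is
    confidence: float  # the model's score for that label, from 0.0 to 1.0


def route_prediction(pred: Prediction, threshold: float = 0.85) -> str:
    """Decide what to do with a model output (hypothetical policy).

    Predictions at or above the threshold are automated; everything
    else is sent to a person. The threshold is an assumed value that
    a real project would agree with the business.
    """
    if not 0.0 <= pred.confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    return "auto_approve" if pred.confidence >= threshold else "human_review"


print(route_prediction(Prediction("invoice", 0.97)))  # auto_approve
print(route_prediction(Prediction("invoice", 0.61)))  # human_review
```

Traditional software would return one answer for a given input. Here the same label can lead to different actions depending on how sure the model is, which is why threshold choices belong in the project scope.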

If you’re also evaluating broader software partners, our custom software development guide covers the fundamentals of choosing any development partner.

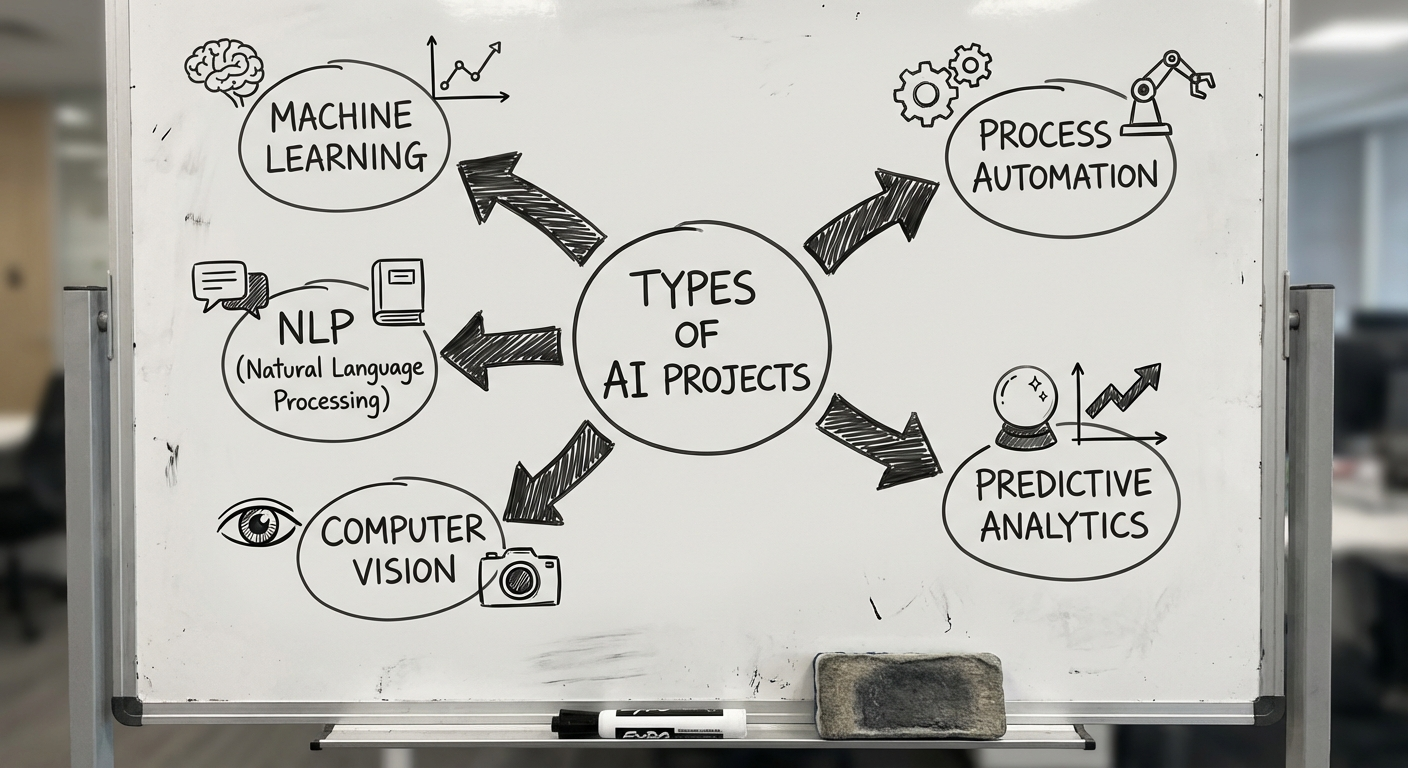

Types of AI Projects

Not all AI is created equal. Understanding the category helps you evaluate whether a company has relevant experience.

Machine Learning Models

The bread and butter. Predictive models that learn patterns from historical data: demand forecasting, churn prediction, credit scoring, recommendation engines. These require clean data pipelines and solid statistical foundations.

Natural Language Processing (NLP)

Making machines understand and generate human language. Chatbots, document classification, sentiment analysis, summarisation. Post-GPT, the landscape has shifted dramatically—many NLP tasks that required custom models can now be solved with well-orchestrated LLM APIs.

Computer Vision

Teaching systems to interpret images and video. Quality inspection on manufacturing lines, medical imaging analysis, security monitoring, OCR for document processing. These projects are hardware-adjacent and often require edge deployment.

Process Automation (Intelligent RPA)

Going beyond simple rule-based automation. AI-powered automation handles the messy cases that traditional RPA chokes on—invoices with inconsistent formats, emails that need judgement calls, data entry from unstructured sources.

AI Integration & Augmentation

Not building models from scratch, but integrating existing AI capabilities (OpenAI, Google Cloud AI, AWS Bedrock) into your products and workflows. This is increasingly the smart play—using frontier models as building blocks rather than reinventing them.

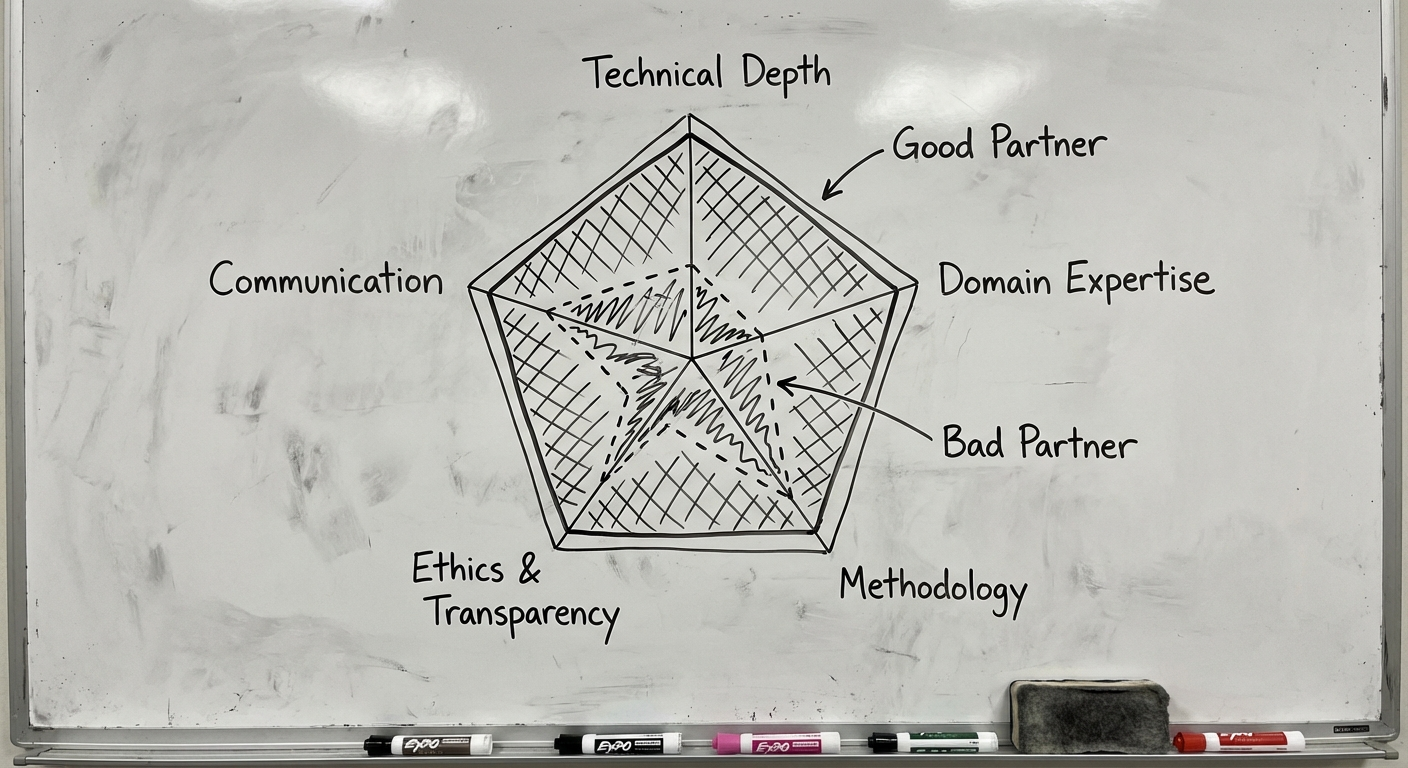

How to Evaluate an AI Development Partner

This is where most buyers get it wrong. They evaluate AI companies the same way they’d evaluate a web agency. The criteria are different.

1. Portfolio: Production, Not Prototypes

Ask specifically about models that went to production and stayed there. Anyone can build a demo. The hard part is deploying a model that handles real-world data, edge cases, and scale.

Questions to ask:

- “How many of your AI projects are currently running in production?”

- “What’s the longest a client has maintained one of your models?”

- “Can you show me before/after metrics from a deployed system?”

If they can only show you Kaggle competitions and proofs of concept, keep looking.

2. Team Composition

A real AI team isn’t just data scientists. Look for:

- ML Engineers — Bridge the gap between research and production

- Data Engineers — Build the pipelines that feed models

- Domain Specialists — Understand your industry’s data patterns

- DevOps/MLOps — Keep models running, monitored, and updated

If a company can’t explain who on their team handles MLOps, they probably don’t have anyone. And that means your model dies the day after deployment.

3. Methodology: How They Handle Uncertainty

AI projects carry more uncertainty than traditional software. Good partners acknowledge this with:

- Feasibility phases before committing to full builds

- Clear success metrics defined upfront (accuracy thresholds, latency requirements)

- Iterative development with regular model performance reviews

- Honest communication when the data doesn’t support the desired outcome

Bad partners promise 99% accuracy in the proposal, before they have seen your data.
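Success metrics defined upfront can be written down as a check that either passes or fails. The sketch below assumes two illustrative targets, an accuracy floor and a 95th-percentile latency budget. The names `SuccessCriteria`, `accuracy`, `p95`, and `meets_criteria` are hypothetical, and the numbers are placeholders, not recommendations.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SuccessCriteria:
    """Targets agreed before the build starts (values are illustrative)."""
    min_accuracy: float        # fraction of correct predictions, e.g. 0.90
    max_p95_latency_ms: float  # 95th-percentile response-time budget


def accuracy(y_true: list[int], y_pred: list[int]) -> float:
    """Fraction of predictions that match the known labels."""
    if not y_true or len(y_true) != len(y_pred):
        raise ValueError("need two non-empty lists of equal length")
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def p95(latencies_ms: list[float]) -> float:
    """95th percentile using the nearest-rank method."""
    if not latencies_ms:
        raise ValueError("need at least one latency sample")
    ordered = sorted(latencies_ms)
    rank = (95 * len(ordered) + 99) // 100  # integer form of ceil(0.95 * n)
    return ordered[rank - 1]


def meets_criteria(criteria: SuccessCriteria, y_true: list[int],
                   y_pred: list[int], latencies_ms: list[float]) -> bool:
    """True only if both the accuracy and latency targets are met."""
    return (accuracy(y_true, y_pred) >= criteria.min_accuracy
            and p95(latencies_ms) <= criteria.max_p95_latency_ms)
```

A partner who agrees to a check like this at the feasibility stage is committing to something measurable. Accuracy alone is rarely the right metric for imbalanced problems, so treat it here as a stand-in for whichever metric fits your use case.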

4. Ethics and Data Governance

This matters more than most buyers realise. Ask about:

- Bias testing — How do they detect and mitigate model bias?

- Data privacy — Where does your data live during training? Who has access?

- Explainability — Can the model’s decisions be explained to regulators or customers?

- Compliance — Are they familiar with your industry’s regulatory requirements?

If they look confused when you ask about bias testing, that tells you everything.
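As a rough idea of what a first bias test can look like, the sketch below compares approval rates across groups, a check often called demographic parity. It is one simple metric among several and not a full fairness audit. The function names, group labels, and sample data are hypothetical.

```python
from collections import defaultdict


def selection_rates(groups: list[str], approved: list[bool]) -> dict[str, float]:
    """Share of positive decisions per group."""
    if not groups or len(groups) != len(approved):
        raise ValueError("need two non-empty lists of equal length")
    totals: dict[str, int] = defaultdict(int)
    positives: dict[str, int] = defaultdict(int)
    for group, decision in zip(groups, approved):
        totals[group] += 1
        positives[group] += int(decision)
    return {group: positives[group] / totals[group] for group in totals}


def parity_gap(rates: dict[str, float]) -> float:
    """Difference between the highest and lowest group selection rate."""
    return max(rates.values()) - min(rates.values())


# Made-up decisions for two groups, purely for illustration.
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]
approved = [True, True, True, False, True, False, False, False]

rates = selection_rates(groups, approved)
print(rates)              # {'A': 0.75, 'B': 0.25}
print(parity_gap(rates))  # 0.5
```

A large gap does not prove the model is unfair, and a small one does not prove it is fair. It is a prompt for the conversation you want a partner to be able to have.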

Red Flags: When to Walk Away

Years of working in this space have taught us the warning signs. Here’s what should make you nervous:

🚩 “We can do any AI project”

Genuine AI expertise is specialised. A company that claims equal competence in computer vision, NLP, reinforcement learning, and robotics is either massive (think Google) or lying. Good firms know what they’re great at and refer out the rest.

🚩 No discussion of data quality

If a company jumps straight to model architecture without asking about your data, they’re building a house on sand. As a rough rule of thumb, data quality determines about 80% of AI project success. Any partner who skips this conversation is optimising for the sale, not the outcome.

🚩 Proprietary lock-in

Watch out for companies that:

- Won’t give you access to your own model weights

- Build on proprietary frameworks you can’t maintain without them

- Don’t provide documentation or knowledge transfer

- Make it contractually difficult to leave

🚩 No MLOps story

“We build it, you maintain it” is a red flag when the company hasn’t invested in MLOps. Models need monitoring, retraining triggers, data drift detection. If there’s no plan for Day 2, you’re buying a depreciating asset.
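Data drift detection can sound abstract, so here is a minimal sketch of one common approach, the Population Stability Index (PSI), which compares how a feature was distributed at training time with how it looks in production. This is an illustrative choice, not the only method. The function name `psi`, the bin count, and the small `eps` floor are assumptions.

```python
import math


def psi(expected: list[float], actual: list[float], bins: int = 10) -> float:
    """Population Stability Index between a baseline and a new sample.

    Bins are equal-width over the baseline's range. New values outside
    that range are clamped into the first or last bin.
    """
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(expected), max(expected)
    if lo == hi:
        raise ValueError("baseline has no spread, so it cannot be binned")
    width = (hi - lo) / bins

    def fractions(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        eps = 1e-6  # floor so empty bins do not cause log(0) or division by zero
        return [max(c / len(values), eps) for c in counts]

    base, new = fractions(expected), fractions(actual)
    return sum((a - e) * math.log(a / e) for e, a in zip(base, new))


baseline = [float(v) for v in range(100)]   # stand-in for training data
shifted = [v + 50.0 for v in baseline]      # stand-in for drifted live data

print(psi(baseline, baseline))  # 0.0, identical distributions
print(psi(baseline, shifted) > 0.25)  # True
```

A widely quoted rule of thumb treats a PSI above roughly 0.25 as a significant shift worth investigating, but the right threshold and the retraining trigger attached to it depend on the model and should be agreed as part of the Day 2 plan.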

🚩 Buzzword density exceeds substance

If the proposal mentions “revolutionary,” “disruptive,” and “cutting-edge” more often than specific methodologies, accuracy targets, and data requirements—it’s marketing, not engineering.

Build In-House vs. Outsource AI

This is the classic question, and the answer is—predictably—“it depends.”

Build In-House When:

- AI is core to your product — If AI IS what you sell, you need internal capability

- You have proprietary data advantages — Sensitive data that can’t leave your walls

- You’re playing the long game — Building a 5-year competitive moat

- You can attract talent — And you can afford to. Senior ML engineers command $200-400K+ in markets like Australia

Outsource When:

- You need to validate quickly — Test an AI hypothesis in 8-12 weeks, not 12 months

- The project is bounded — Clear scope, defined outcome, specific timeline

- You lack internal expertise — And building a team isn’t justified for one project

- You want production quality fast — An experienced partner has the MLOps infrastructure already

The Hybrid Approach

The smartest companies often do both. They outsource the initial build and validation, then gradually bring capability in-house as the AI becomes core. Your outsource partner becomes a strategic advisor rather than a full-time builder.

This is actually how we approach development at Synetica—building real capability, not dependency.

Synetica’s Approach to AI Development

We should be transparent about our own position here, since we are, in fact, an AI development company.

Our philosophy is simple: practical AI, not hype.

Here’s what that means concretely:

We Start with the Business Problem

Not the technology. We’ve turned away projects where a well-designed dashboard would solve the problem better than a machine learning model. If your problem doesn’t need AI, we’ll tell you. We’d rather build something useful than something impressive.

We Validate Before We Build

Every AI engagement starts with a feasibility phase. We assess your data, define success metrics, and build a minimal proof-of-concept before anyone commits to a full build. If the data doesn’t support the outcome, we say so early—when it’s cheap to pivot.

We Build for Production, Not Demos

Our engineering team includes ML engineers and DevOps specialists who build deployment pipelines, monitoring systems, and retraining workflows from day one. The model isn’t done when it hits 90% accuracy in a notebook. It’s done when it’s running reliably in production and delivering measurable business value.

We Transfer Knowledge

We don’t want you dependent on us forever. Every project includes documentation, knowledge transfer sessions, and—where appropriate—training for your internal team to maintain and extend the system.

What Does AI Development Cost?

Let’s talk numbers. These are realistic ranges based on market rates in Australia and our own project experience.

| Project Type | Typical Range (AUD) | Timeline |
|---|---|---|
| Feasibility Study / POC | $15,000 - $40,000 | 2-4 weeks |
| MVP / Pilot Deployment | $50,000 - $150,000 | 6-12 weeks |
| Production AI System | $150,000 - $500,000+ | 3-9 months |
| Ongoing MLOps & Maintenance | $3,000 - $15,000/month | Continuous |

What Drives Costs Up

- Data preparation complexity — Messy, siloed, or insufficient data

- Custom model training — vs. fine-tuning or using existing APIs

- Integration complexity — Legacy systems, real-time requirements

- Compliance requirements — Healthcare, finance, government

- Scale — Processing millions of records vs. thousands

What Keeps Costs Down

- Clean, well-structured data — This alone can cut timelines by 40%

- Using existing AI APIs where appropriate (OpenAI, Google, AWS)

- Clear, bounded scope — “Predict X for Y” beats “make us AI-powered”

- Phased approach — Validate before scaling

The most expensive AI project is the one that fails after six months because nobody validated the data first. A $20K feasibility study that kills a bad idea saves $200K in wasted development.
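The arithmetic behind that claim can be sketched in a few lines. The model below is deliberately simple and rests on stated assumptions: the study always costs its fee, and it only saves the build cost when the idea is bad and the study catches it. The function names and the `p_bad` and `catch_rate` parameters are hypothetical, and the dollar figures are the ones used in the paragraph above.

```python
def expected_saving(build_cost: float, study_cost: float,
                    p_bad: float, catch_rate: float = 1.0) -> float:
    """Expected net saving from running a feasibility study first.

    p_bad      : assumed probability that the idea would fail in a full build
    catch_rate : assumed probability that the study spots a bad idea
    """
    return p_bad * catch_rate * build_cost - study_cost


def break_even_probability(build_cost: float, study_cost: float,
                           catch_rate: float = 1.0) -> float:
    """Failure probability above which the study pays for itself."""
    return study_cost / (catch_rate * build_cost)


# The idea is certainly bad and the study catches it: the net saving
# is the $200K build avoided minus the $20K spent on the study.
print(expected_saving(200_000, 20_000, p_bad=1.0))  # 180000.0

# With a perfect study, it breaks even once the chance of failure
# exceeds the study cost divided by the build cost.
print(break_even_probability(200_000, 20_000))  # 0.1
```

Under these assumptions, the study is worth running whenever you think there is more than about a one in ten chance that the data will not support the outcome. A less reliable study raises that bar, which is one reason to ask what the feasibility phase actually tests.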

Making Your Decision

Choosing an AI development company comes down to three questions:

- Do they understand your problem before proposing a solution? If the first meeting is all about their technology stack, be cautious.

- Can they show production results, not just demos? Demos are easy. Production is hard.

- Do they have a plan for after launch? Models need care. Partners who disappear after deployment aren’t partners.

The AI space will keep evolving. Models will get more capable. Costs will come down. But the fundamentals of choosing a good engineering partner haven’t changed: look for competence, honesty, and a genuine interest in solving your problem.

Ready to Talk?

We don’t do sales pitches. We do working sessions.

Tell us what you’re trying to solve, and we’ll be honest about whether AI is the right approach—and whether we’re the right team.
